Project Neverland

Team TheatAR is a student pitch project at Carnegie Mellon's Entertainment Technology Center (Fall 2018) that is creating Project Neverland. It aims to create a theatrical experience in which a live actor and an animated character share the same stage, in real time, using augmented reality.

I’ll focus on my own contribution and process here; see our team website for the complete process.

First… the amazing team

Raisa Chowdhury: Set Designer

Sudha M R: Programmer

Euna Park: 3D Animator and Co-Producer

Cassidy Pearsall: Theatrical Production Manager

Apoorva Ramesh: Programmer

Daniel Wolpow: Creative Director and Producer

After a lot of iteration and experimentation with different kinds of hardware, we chose a story that would complement the strengths and weaknesses of wearable augmented reality and make a good case for using the technology in a theatrical setting. To that end, we chose to recreate the nursery scene from Peter Pan. Peter and Wendy will be live actors, and Tinker Bell will be an animated augmented reality character. We are aiming to have Tinker Bell interact believably with both the physical set and the actors.

When comparing several possible Tinker Bell models, we weighed character design and whether each model included a game engine-ready rig for animation. We purchased a fairy model and outsourced biped and facial rigging to the amazing Sahar Kausar, which freed me to collect reference videos and hammer out the animation pipeline: creating acting sequences in Maya, and moving the character and plotting her route in Unity.

Gold Spike - Technical Pipeline Iteration

A ceremonial gold spike was used to connect the first transcontinental railroad in the United States. This is why at the ETC, we use the term “Gold Spike” to signify that we’ve reached a working technical pipeline.

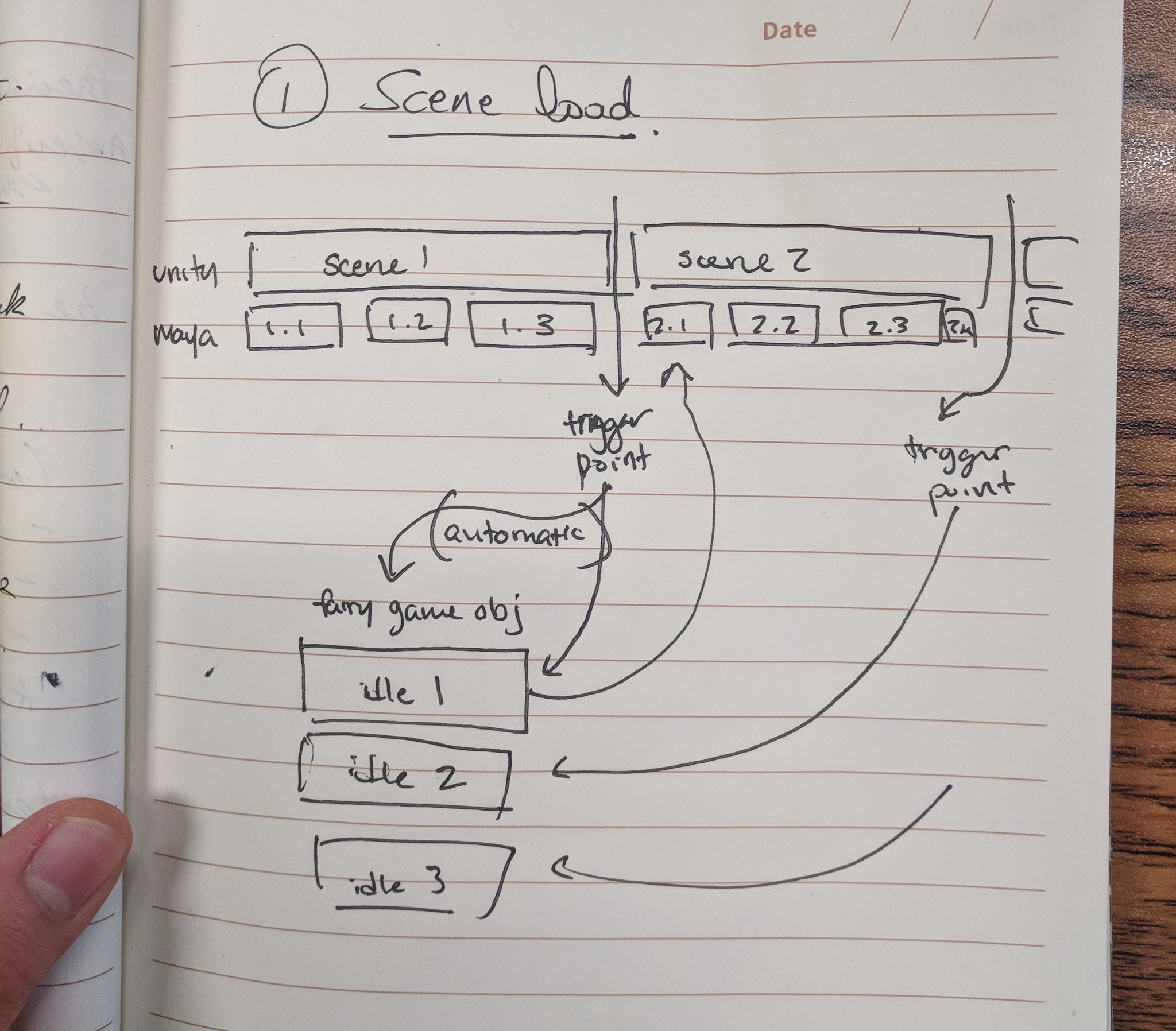

Developing this pipeline was a collaborative process between me and our team’s programmers, because I am responsible for how the animation assets are created, organized, and manipulated in both Maya and Unity. The programmers and I used sketches like the one shown to communicate, both in meetings and in back-and-forth conversations.

Using a Kiel Figgins rig that I had for personal practice, I made a sequence of animations in Maya, which we then put into Unity Timeline.

A hover

A transition

A flight

We put them into the engine and added Unity movement in Timeline. So far, this has worked out with test animations.

In other words, a Gold Spike is a small demo that proves the pipeline works, so that pipeline problems don't get in the way of the rest of our project.

Animation Preparation

Once the story was chosen, and while the stage was still underway, reference video of hummingbirds, indoor skydivers, and Disney’s 2D and 3D renditions of Tinker Bell was studied. In this way, an animation style could be prepared as an aesthetic target while the specifics of the pipeline were being ironed out.

The team spent some time at the space together and discussed props and production for practical effects before mapping out the space. We taped off the area and put in temporary placeholders where we would have our props.

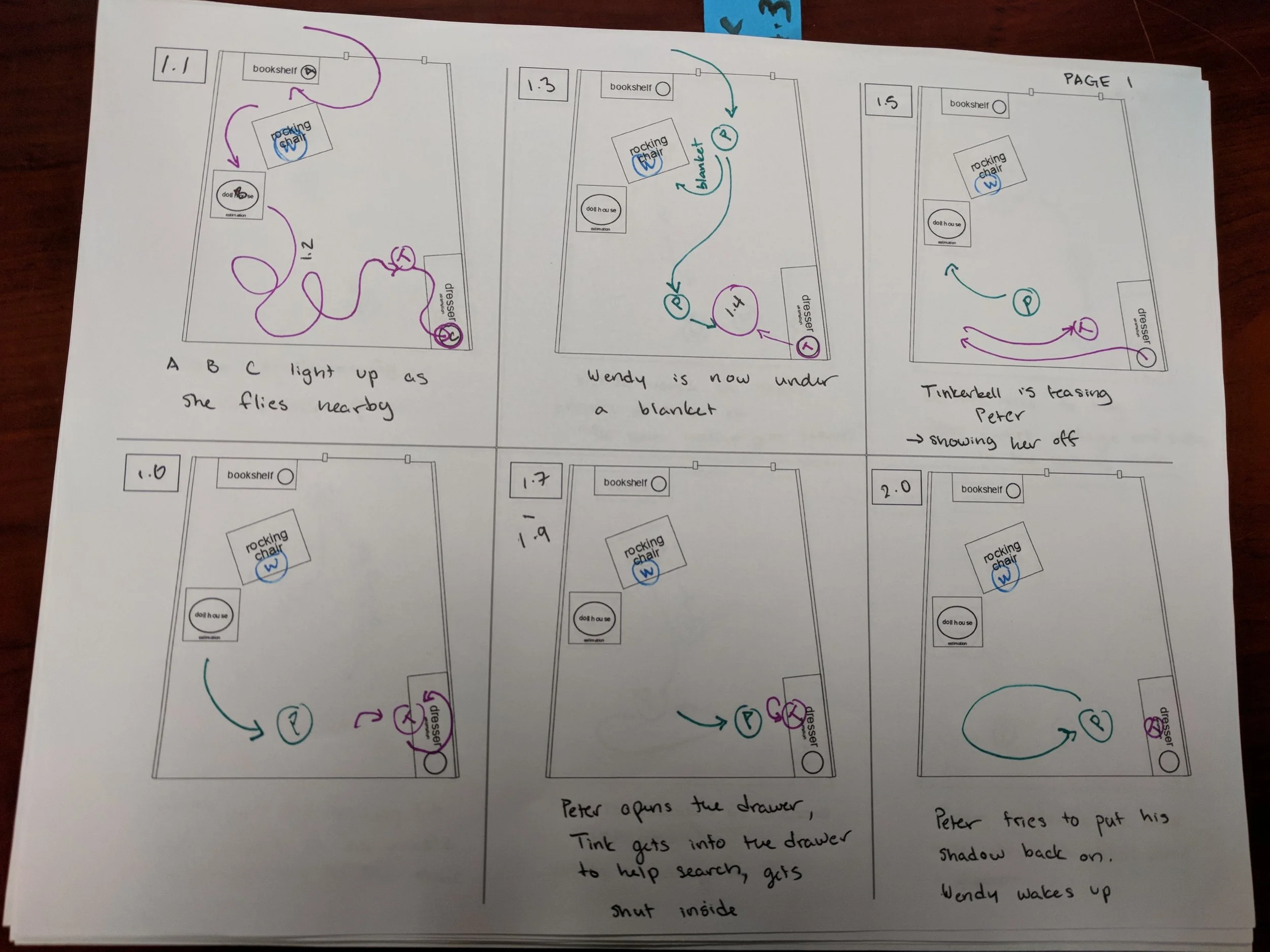

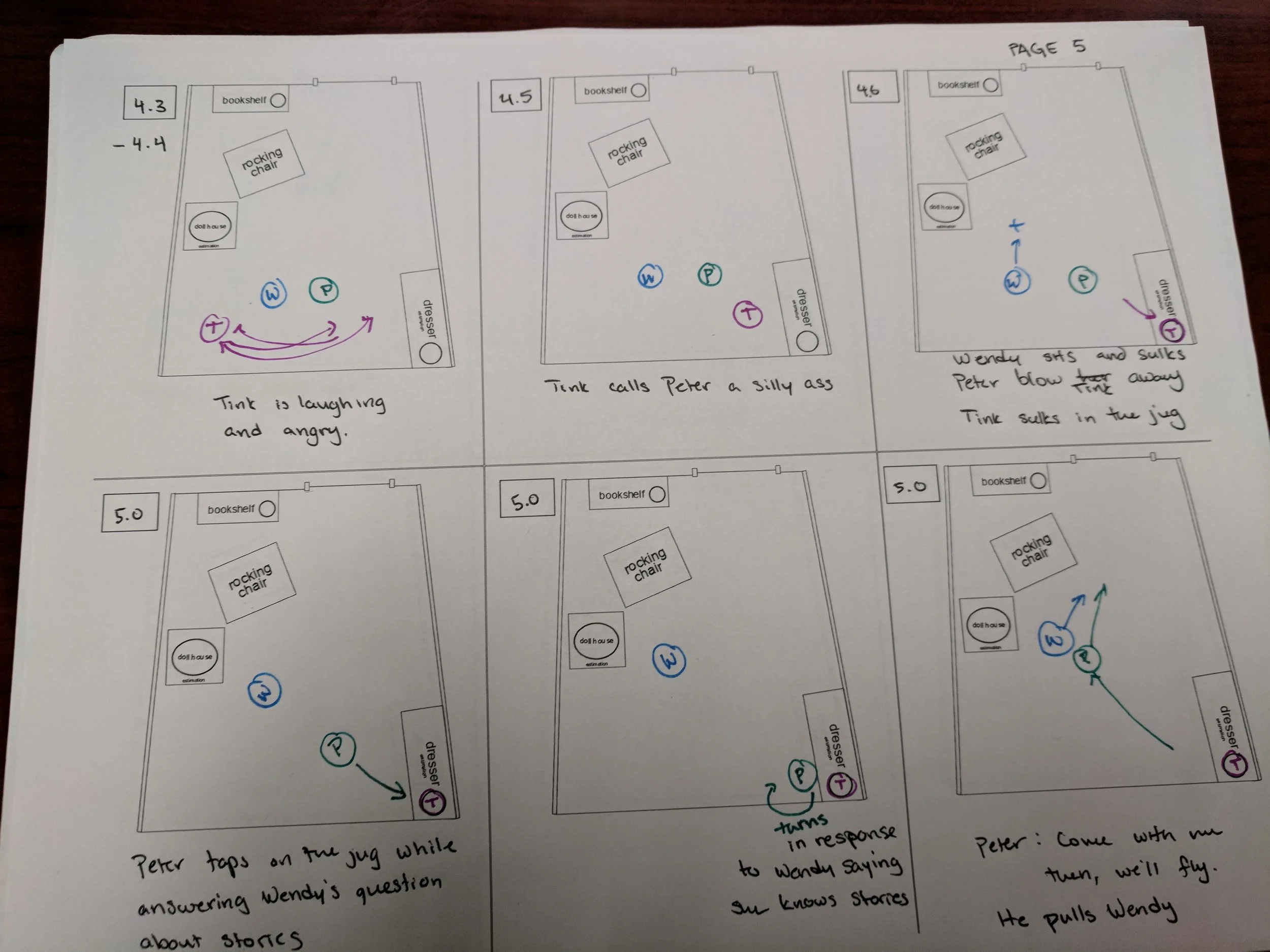

Our Theatrical Production Manager took these measurements and put them into a schematic, which I then turned into a template for our storyboarding.

Once the script was complete and the first blueprint of the stage was given, we storyboarded the blocking for the actors and for Tinker Bell from a top-down view to get a good idea of where Tinker Bell’s cues needed to be, and where Tinker Bell could be in relation to the actors at any given time. We needed to keep in mind that for the most part, Tinker Bell would appear in front of the actors because the digital set replica only accounted for the props and furniture.

Animation Cueing System

Tinker Bell’s animation pipeline posed a particular challenge. Faced with the task of creating close to 2.5 minutes of animation in 3.5 weeks, we made a list of what the pipeline needed.

Flexibility - Animation implementation needed to accommodate rehearsal notes on a day-to-day basis

Variety - A number of movement variants needed to be produced in a short period of time

Human input - Cues needed to be manually delivered in order to

Her performance could not be animated entirely in either Maya or Unity if we were to retain iterative flexibility within our scope and asset management, and it also needed to be relatively dynamic in order to allow our actors to act freely. In collaboration with our programmers and creative director, we developed an unusual pipeline specifically for this project. Animation production resulted in an interesting role reversal between the two programs: her flight path was animated in Unity like a cinematic, as one continuous sequence, while her body was animated in Maya in short, gameplay-like cycles. These cycles were layered on top of the flight path in Unity so that both could play at the same time.
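The layering idea can be illustrated with a small, engine-agnostic sketch. It is written in Python rather than as Unity code, and it is not what ran in our build: the waypoint format, the function names (`flight_path`, `hover_cycle`, `layered_position`), and the sine-based bob standing in for a Maya cycle are all assumptions made for illustration.

```python
import math


def flight_path(t, waypoints):
    """Continuous path: linearly interpolate between timed waypoints.

    `waypoints` is a hypothetical format, [(time_seconds, (x, y, z)), ...],
    assumed to be sorted by strictly increasing time.
    """
    if t <= waypoints[0][0]:
        return waypoints[0][1]
    for (t0, p0), (t1, p1) in zip(waypoints, waypoints[1:]):
        if t0 <= t <= t1:
            u = (t - t0) / (t1 - t0)
            return tuple(a + (b - a) * u for a, b in zip(p0, p1))
    return waypoints[-1][1]  # hold the final position after the path ends


def hover_cycle(t, period=1.0, amplitude=0.05):
    """Short looping cycle: a local vertical bob that repeats every `period`."""
    phase = (t % period) / period
    return (0.0, amplitude * math.sin(2 * math.pi * phase), 0.0)


def layered_position(t, waypoints):
    """Add the looping cycle's local offset on top of the continuous path."""
    base = flight_path(t, waypoints)
    offset = hover_cycle(t)
    return tuple(b + o for b, o in zip(base, offset))
```

Because the cycle only contributes a local offset, the path can be re-plotted after a rehearsal note without touching the cycle, and a cycle can be swapped without re-plotting the path.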

Maya Animation

In order to create animation as efficiently as possible, only two Maya files were maintained. One was made for locomotion, and the other was made for acting. There was a baseline idle made in each one, and then necessary animations were created in layers. In this way, elements like wing flutter and y-axis movement could be preserved.

These clips were exported into Unity, and incorporated into Unity’s Timeline feature. Maya clips were all integrated into their own track, and the flight path was animated in Unity itself, on a separate track.

Unity Animation

Because the earlier prototypes had already nailed down a ‘gold spike’ for getting animation into Unity, we built on that work to further develop Tinker Bell’s performance animation. To make Tinker Bell feel as though she was constantly moving, even while she was waiting for a cue, we orchestrated her performance with Unity’s Timeline feature. Cues were scripts placed on activation tracks in the Timeline; each one froze the Timeline and handed Tinker Bell’s animation over to a state machine with idle animations specified for her mood at that point in the script.
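The cue behavior described here can be sketched as a tiny simulation. This is a Python illustration of the idea, not Unity code and not our actual scripts: the `Cue` and `CuedTimeline` classes, their fields, and the `"idle:<mood>"` naming are hypothetical, and the real state machine is reduced to a single idle label.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Cue:
    time: float      # timeline time (seconds) at which playback freezes
    idle_state: str  # idle loop to play while waiting, e.g. a mood


@dataclass
class CuedTimeline:
    cues: List[Cue]  # assumed to be sorted by time
    time: float = 0.0
    next_cue: int = 0
    waiting_on: Optional[Cue] = None

    def update(self, dt: float) -> None:
        """Advance playback by dt, unless frozen at a cue."""
        if self.waiting_on is not None:
            return  # frozen: the idle state is in control
        self.time += dt
        if self.next_cue < len(self.cues):
            cue = self.cues[self.next_cue]
            if self.time >= cue.time:
                self.time = cue.time  # stop exactly on the cue
                self.waiting_on = cue
                self.next_cue += 1

    def trigger(self) -> bool:
        """Operator input: release the current cue. True if one was released."""
        if self.waiting_on is None:
            return False
        self.waiting_on = None
        return True

    @property
    def active_animation(self) -> str:
        """Which system is driving the character right now."""
        if self.waiting_on is not None:
            return "idle:" + self.waiting_on.idle_state
        return "timeline"
```

A typical run calls `update` once per frame and `trigger` when the operator's input arrives; while a cue is pending, `active_animation` reports the idle loop for that point in the script instead of the timeline.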

There were a few goals that we were not able to hit in the way we handled animation in Unity. We realized that the cue markers needed to be bookended by idle states on the Timeline, since each cue entered the state machine that handled Tinker Bell’s animation while she waited for the next cue. This was necessary because we did not have time to work out how to blend her animation smoothly in and out of cues while also using the Timeline in an older version of Unity (2017.4). Bookending was the solution that produced the least noticeable snapping between animation clips, because she was in roughly the same position on either side of a cue.

Halves Presentation - Week 9

After some debate, we decided to present a live demo at our Halves Presentation, which actually fell closer to two-thirds of the way through the semester.

Following some feedback and critique, we made some changes. We initially adjusted Tinker Bell’s dress textures to the color of autumn leaves, keeping the story (the original J. M. Barrie script) in mind. We ended up pushing her dress to a saturated pink, however, because either her body wasn’t appearing clearly in the hologram produced by the HoloLens, or the golden color was appearing too close to her skin tone and giving the impression that she was unclothed. (Before and after color adjustments are shown below.)

Cueing Errors - HoloLens Clicker Reliability

The biggest hurdle for our cueing system was that the HoloLens clicker was not completely reliable. We could expect it to fail to respond at least twice per performance, but we had no way of predicting on which cue this would happen. Our actors were incredible: as we ran through rehearsals, they ended up adopting small improv moments for every cue to make sure that the energy of the performance never came to a standstill. To make sure that the light and sound cues for the actors never got ahead of the animation, we sat in the back of the theater. When cueing animation, I relied on watching Unity’s Timeline progress in a text box that our programmers had integrated into the master build, not on whether I had pressed the clicker.

When I saw that a cue had worked, I would squeeze the Physical Interaction Designer’s hand so that she would start the accompanying light/sound cue for that moment.
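The principle behind this, confirming a cue from the observed Timeline progress rather than from the button press, can be written down as a short sketch. In our performances this was done by people, not software, so everything here is hypothetical: the function names, the callbacks passed in, the retry loop, and the assumption that the timeline time can be read immediately after a press.

```python
def cue_fired(time_before, time_after, epsilon=1e-3):
    """A cue counts as fired only if the timeline actually advanced."""
    return (time_after - time_before) > epsilon


def run_cue(press_clicker, read_timeline_time, start_light_sound,
            max_attempts=3):
    """Press until the timeline is seen to move, then start light/sound.

    Returns the attempt number that succeeded, or None if every attempt
    failed. The retry limit is an illustrative assumption.
    """
    for attempt in range(1, max_attempts + 1):
        before = read_timeline_time()
        press_clicker()  # may silently do nothing
        after = read_timeline_time()
        if cue_fired(before, after):
            start_light_sound()  # only after the animation is confirmed
            return attempt
    return None
```

The ordering is the important part: the light and sound cue is started only after the animation is confirmed, so it can never run ahead of Tinker Bell.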